1,500 PRs in 5 Months — The OpenAI Harness Benchmark

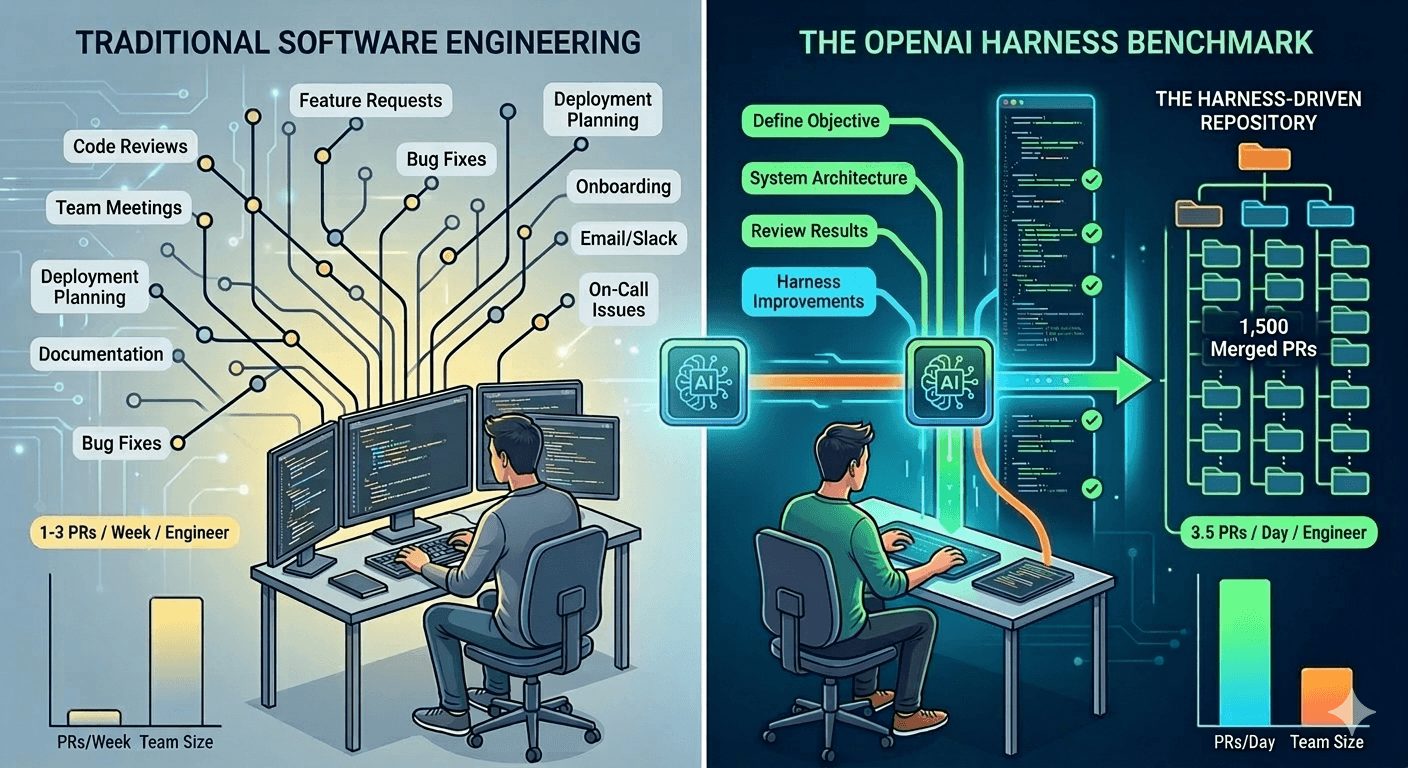

OpenAI's Harness team shipped ~1 million lines of production code with zero manual code — 1,500 PRs, 3.5 PRs/day/engineer, 90% time savings. Here's what it means for the future of software delivery.

In Part 1 of this series, I argued that code generation has become infinite and free, and that the real value has shifted to architectural oversight — building the harnesses that keep AI agents on the path. I introduced the metaphor of the horse and the harness: the AI is a powerful, high-speed horse, and without reins, fences, and a clear path, you're just hoping it knows the way home.

I expected the industry to catch up to this idea gradually. Then Ryan Lopopolo's team at OpenAI went and proved it in five months flat — with numbers that should make every engineering leader pay attention.

What Was the OpenAI Harness Experiment?

The OpenAI Harness experiment was a five-month effort led by Ryan Lopopolo to build and ship an internal software product using Codex agents to drive all development tasks. Not "AI-assisted" development. Not "copilot" development. Fully agent-driven development, with humans acting as architects and reviewers — never as typists.

The results? Roughly 1 million lines of production code. 1,500 merged pull requests. Zero manually written lines of code. An engineer throughput of 3.5 PRs per day. And an estimated 90% time savings versus traditional development.

Let me say that again: a team went from zero to a million lines of shipped, production-grade code, and not a single human typed a line of it.

The Numbers That Matter

Here's what the OpenAI Harness team actually delivered:

| Metric | Recorded Value |
|---|---|
| Experiment Duration | 5 months |
| Total Lines of Code | ~1,000,000 |
| Human-Written Lines | 0 |
| Total Pull Requests Merged | ~1,500 |
| Engineer Throughput | 3.5 PRs / day / engineer |
| Estimated Time Savings | 90% vs. traditional development |

A 90% time saving means the work took an estimated one-tenth of the time that traditional, hand-written development would have required. Think about that. A team delivering at roughly 10x the pace, not by working harder, but by fundamentally rethinking what the human's job is.
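As a rough sanity check on how these figures relate to each other, here is the arithmetic using only the numbers in the table plus one assumption of mine: about 21 working days per month, which the source does not state. Treat it as a back-of-the-envelope sketch, not as data from the experiment.

```python
# Figures taken from the table above.
total_prs = 1_500
total_lines = 1_000_000
prs_per_engineer_day = 3.5
months = 5
time_savings = 0.90

# Assumption (not from the source): roughly 21 working days per month.
working_days_per_month = 21

working_days = months * working_days_per_month           # 105 days
engineer_days = total_prs / prs_per_engineer_day          # engineer-days of work implied
implied_avg_engineers = engineer_days / working_days      # average team size implied
lines_per_pr = total_lines / total_prs                    # average PR size
speedup = 1 / (1 - time_savings)                          # 90% saved is about 10x faster

print(f"Engineer-days implied: {engineer_days:.0f}")              # 429
print(f"Average team size implied: {implied_avg_engineers:.1f}")  # 4.1
print(f"Average lines per PR: {lines_per_pr:.0f}")                # 667
print(f"Speedup implied by 90% savings: {speedup:.0f}x")          # 10x
```

Under that working-days assumption, the implied average of about four engineers is plausible for a small team, so the headline numbers are at least internally consistent. Whether 3.5 PRs per day held steady over the whole five months is not something the published figures settle.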

After 13 years of leading engineering teams, I've watched countless "productivity multiplier" claims come and go. This one is different because it isn't a tooling improvement bolted onto the same workflow. It's a different workflow entirely.

What Is Harness Engineering?

Harness Engineering is the discipline of building the automated infrastructure — tests, types, CI/CD pipelines, linting rules, documentation, and architectural constraints — that allows AI agents to operate with production-grade reliability without human hand-holding. Instead of writing code yourself, you design the environment in which agents write code safely. Think of it as engineering the reins, fences, and path for the horse, rather than pulling the cart yourself.

This is the core idea that the OpenAI team validated at scale.

How Does Harness-First Development Work?

Here's the part that really resonated with me. The OpenAI Harness team had a rule that sounds almost reckless until you understand the logic: if something failed, the human response was never to manually fix the code.

Never. Not once.

Instead, engineers asked a different question: What capability is missing from the harness that would allow the agent to solve this autonomously?

This is the opposite of how most teams use AI today. Most teams hit an agent failure and either "prompt harder" or just write the code themselves. That's vibe coding — you're back to being the horse instead of the harness designer.

Ryan Lopopolo's team forced themselves to build a self-healing environment. Every agent failure became a permanent improvement to the underlying infrastructure — a new tool, a specific guardrail, a more precise piece of documentation. The harness got smarter with every failure, which meant the same failure never happened twice.
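To make that loop concrete, here is a minimal sketch of "every failure becomes a guardrail." The `Harness` class, the `no_bare_except` check, and the sample change are all hypothetical names I made up for illustration; nothing here describes OpenAI's actual tooling.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

# A guardrail inspects a proposed change and returns an error message,
# or None if the change passes.
Guardrail = Callable[[str], Optional[str]]


@dataclass
class Harness:
    """Hypothetical harness: a growing set of named checks run on every change."""
    guardrails: dict[str, Guardrail] = field(default_factory=dict)

    def add_guardrail(self, name: str, check: Guardrail) -> None:
        # Called by a human after a failure, instead of hand-fixing the code.
        self.guardrails[name] = check

    def review(self, change: str) -> list[str]:
        # Collect feedback the agent can act on; an empty list means "passes".
        feedback = []
        for name, check in self.guardrails.items():
            message = check(change)
            if message is not None:
                feedback.append(f"{name}: {message}")
        return feedback


def no_bare_except(change: str) -> Optional[str]:
    # Deliberately naive text match, to keep the sketch short.
    if "except:" in change:
        return "bare 'except:' found; catch a specific exception type"
    return None


harness = Harness()
agent_change = "try:\n    run()\nexcept:\n    pass\n"

first = harness.review(agent_change)    # nothing catches the mistake yet
harness.add_guardrail("no_bare_except", no_bare_except)
second = harness.review(agent_change)   # the same mistake is now rejected

print(first)   # []
print(second)  # ["no_bare_except: bare 'except:' found; catch a specific exception type"]
```

The point of the design is that the human's fix lands in `add_guardrail`, not in `agent_change`. The agent gets the feedback string and repairs its own work, and the check stays in place for every future change.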

As someone who manages engineers, this is the pattern I've been trying to articulate. The best teams I've worked with don't just fix bugs — they fix the system that allowed the bug. Harness Engineering takes that instinct and makes it the entire methodology.

How Does Harness Engineering Bypass Brooks's Law?

This, to me, is the most significant finding from the entire OpenAI experiment.

Brooks's Law states that adding manpower to a late software project makes it later — because communication overhead grows faster than productive output. It's been one of the iron laws of software engineering for fifty years. Every engineering leader I know has felt it in their bones.

In the OpenAI Harness experiment, throughput increased rather than plateauing as the team grew from three to seven members. Let that sink in. They more than doubled the team, and throughput kept climbing.

Why? Because the harness acts as the primary interface and system of record. The traditional communication bottlenecks between human developers ("Hey, what's the status of that PR?" or "Wait, did you change the API contract?") get absorbed by the infrastructure. The agents handle the mechanical integration. The humans focus on strategic architectural decisions.

Brooks's Law assumes that the bottleneck is human-to-human coordination, where the number of communication paths grows roughly with the square of team size. When you shift to harness-first development, the bottleneck moves to human-to-harness design, and that scales linearly with headcount, not quadratically.
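The scaling argument is easy to check with the standard pairwise-channel count behind Brooks's Law, n(n-1)/2, applied to the team sizes from the experiment. The "hub" model below, where each person coordinates only through the harness, is my simplification for illustration; real teams still talk to each other.

```python
def pairwise_channels(n: int) -> int:
    """Distinct person-to-person communication channels in a team of n."""
    return n * (n - 1) // 2


def hub_channels(n: int) -> int:
    """Channels if each person coordinates only through one shared hub.

    Simplifying assumption: the hub stands in for the harness.
    """
    return n


for size in (3, 7):
    print(
        f"team of {size}: {pairwise_channels(size)} pairwise channels, "
        f"{hub_channels(size)} via a shared hub"
    )
# team of 3: 3 pairwise channels, 3 via a shared hub
# team of 7: 21 pairwise channels, 7 via a shared hub
```

Going from three to seven people multiplies the pairwise channels by seven (3 to 21), while the hub model only adds four. That gap is the overhead the harness is claimed to absorb.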

What This Means

"The competitive advantage of the future will not lie in having the 'best' model... but in having the best harness."

I keep returning to the horse metaphor from Part 1. Everyone in the industry is obsessing over which horse is fastest — GPT-5, Claude 4, Gemini Ultra. Ryan Lopopolo's team at OpenAI just demonstrated that the horse doesn't matter as much as the harness. They used Codex. They could have used any sufficiently capable model. The magic was in the infrastructure they built around it.

Here's what I'm telling my teams: stop optimizing your prompts. Start optimizing your environment. Build the CI pipelines that catch agent mistakes before they hit main. Write the type contracts that make invalid states unrepresentable. Design the architectural layers that tell the agent exactly where it's allowed to step.
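As one example of an architectural layer rule that a CI job could enforce, here is a small sketch that rejects imports crossing layer boundaries. The layer names (`ui`, `service`, `domain`), the `ALLOWED_IMPORTS` table, and the sample patch are all hypothetical; it uses only Python's standard `ast` module and assumes each layer is a top-level package.

```python
import ast

# Hypothetical layering: each layer may import only from the layers listed.
ALLOWED_IMPORTS = {
    "ui": {"service"},
    "service": {"domain"},
    "domain": set(),
}


def layer_violations(layer: str, source: str) -> list[str]:
    """Return the imports in `source` that `layer` is not allowed to make."""
    allowed = ALLOWED_IMPORTS[layer] | {layer}
    violations = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            modules = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom) and node.module:
            modules = [node.module]
        else:
            continue
        for module in modules:
            top_level = module.split(".")[0]
            # Only police imports between our own layers;
            # standard-library and third-party imports are ignored.
            if top_level in ALLOWED_IMPORTS and top_level not in allowed:
                violations.append(f"{layer} may not import {module}")
    return violations


# A patch an agent might propose for a file in the `domain` layer.
agent_patch = (
    "import json\n"
    "from service.orders import place_order\n"
    "from ui.widgets import Button\n"
)

print(layer_violations("domain", agent_patch))
# ['domain may not import service.orders', 'domain may not import ui.widgets']
```

A check like this turns "where the agent is allowed to step" from a convention in someone's head into a message the agent receives on every failed build.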

The keyboard isn't just becoming obsolete — for Ryan Lopopolo's team, it already is obsolete. They shipped a million lines of code without touching it. The question isn't whether this model scales. The question is whether you're building the harness to take advantage of it.

This is Part 2 of the Harness Engineering series. Part 1: The Scarcity Inversion.