Why Modular AI Agent Architecture Outperforms Monolithic Tools for Complex Workflows

Modular AI agent architectures with Pi, Tallow, and TillDone outperform monolithic tools for complex migrations and multi-agent orchestration. Learn why control and context flexibility matter more than batteries-included polish.

You've seen me rave about Claude Code. Now let me show you what happens when you strip AI agents down to their bare essentials—and build back up on your own terms.

If you've followed my work, you know I've been deep in the Claude Code trenches. It's powerful. It's polished. And for 80% of my daily work, it's still my go-to.

But here's the uncomfortable truth I've been wrestling with: for the work that actually matters (complex migrations, multi-day refactors, projects that touch a dozen files), I keep hitting walls.

Not capability walls. Control walls.

So I started exploring what happens when you flip the paradigm entirely. When you stop asking "what can this tool do?" and start asking "what's the minimum I need to build exactly what I want?"

The answer led me to Pi.dev, a plugin called Tallow, and a workflow pattern named TillDone. And it's fundamentally changed how I think about AI engineering.

The Problem with "Batteries Included"

Here's something most AI coding tools don't want you to think about: that massive system prompt running behind the scenes.

Claude Code, like most polished AI agents, ships with system prompts that can exceed 10,000 tokens. These handle safety, formatting, tool logic, and dozens of edge cases. It's why the experience feels so seamless out of the box.

But context windows are a zero-sum game.

Every token spent on the system prompt is a token you can't use for your actual codebase. When you're working on a large-scale refactor and the AI needs to "see" multiple files simultaneously, this matters. A lot.

Pi takes a radically different approach: approximately 300 tokens for the core system prompt. Just the essentials — read, write, edit, and bash. That's it. Nothing more, nothing less.

The result? You get a massive head start on context. You can send more relevant code context without ballooning per-request costs. And you have room to breathe when the project gets complicated.
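
To put rough numbers on that, here is a back-of-the-envelope sketch. The 200,000-token window is an assumed figure for illustration only (check your model's actual limit), and the two prompt sizes are the approximate figures quoted above.

```typescript
// Rough context-budget arithmetic.
// CONTEXT_WINDOW is an assumption for illustration, not a figure for any specific model.
const CONTEXT_WINDOW = 200_000;

// Tokens left for your own code and conversation after the system prompt is paid for.
function tokensLeftForCode(systemPromptTokens: number, windowTokens: number = CONTEXT_WINDOW): number {
  return Math.max(0, windowTokens - systemPromptTokens);
}

const monolithic = tokensLeftForCode(10_000); // 190000
const minimal = tokensLeftForCode(300); // 199700

console.log(`Monolithic prompt leaves ${monolithic} tokens`);
console.log(`Minimal prompt leaves ${minimal} tokens`);
console.log(`Difference: ${minimal - monolithic} tokens on every request`);
```

The gap is a fixed 9,700 tokens whatever window you assume, so it weighs more heavily on smaller windows and on sessions that make many requests.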

Tallow: What Modular Architecture Actually Looks Like

The argument for minimal cores only works if you can actually build on top of them. That's where Tallow comes in.

Built as a plugin on top of Pi, Tallow demonstrates something important: you don't need a monolithic application to get high-end features. It achieves near-parity with "batteries-included" tools while remaining incredibly flexible.

The killer features that sold me:

Multi-Model Routing

You're not locked into one provider. In a single session, you can use Claude 3.5 Sonnet for deep reasoning during the planning phase, switch to GPT-4o for rapid iteration, and then use DeepSeek for cost-effective heavy lifting.

Think about that for a second. You're not just using AI—you're orchestrating AI.
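
One way to picture this is a plain lookup from workflow phase to model. The sketch below is not Tallow's API: the `Phase` names, the `ModelChoice` shape, and the model identifier strings are hypothetical placeholders, so substitute whatever your own setup expects.

```typescript
// Hypothetical phase-to-model routing table.
// None of these names come from Tallow or Pi; the identifiers are placeholders.
type Phase = "plan" | "iterate" | "bulk";

interface ModelChoice {
  provider: string;
  model: string;
}

const routes: Record<Phase, ModelChoice> = {
  plan: { provider: "anthropic", model: "claude-3-5-sonnet" }, // deep reasoning
  iterate: { provider: "openai", model: "gpt-4o" }, // rapid iteration
  bulk: { provider: "deepseek", model: "deepseek-chat" }, // cost-effective heavy lifting
};

// Looks up which model should handle the given phase.
function pickModel(phase: Phase): ModelChoice {
  return routes[phase];
}

console.log(pickModel("plan").model);
```

Keeping the mapping in one table means changing providers is a one-line edit rather than a change to the workflow itself.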

Ephemeral Sub-Agents

You can spawn specialized workers for specific sub-tasks. One agent handles documentation while another focuses on core implementation. This eliminates "context bleed"—that frustrating phenomenon where your main engineering thread gets confused by unrelated work.
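
The isolation idea can be sketched in a few lines. This is a simplified illustration, not how Tallow implements it: `ModelCall` is an assumed stand-in for a real model request, and `fakeModel` exists only so the example runs without a provider.

```typescript
// Sketch of context isolation for sub-agents.
// `ModelCall` stands in for a real model request; it is an assumption, not a Pi or Tallow API.
type Message = { role: "system" | "user" | "assistant"; content: string };
type ModelCall = (history: Message[]) => Promise<string>;

// Runs one task in a fresh history and returns only the final answer,
// so none of the sub-agent's intermediate turns reach the parent thread.
async function runSubAgent(role: string, task: string, callModel: ModelCall): Promise<string> {
  const history: Message[] = [
    { role: "system", content: `You are a ${role} specialist.` },
    { role: "user", content: task },
  ];
  return callModel(history); // `history` goes out of scope once this returns
}

// Fake model for demonstration: reports how many messages it was shown.
const fakeModel: ModelCall = async (history) => `saw ${history.length} messages`;

async function demo(): Promise<string[]> {
  const mainThread: Message[] = [{ role: "user", content: "Refactor the auth module." }];
  const docs = await runSubAgent("documentation", "Update the README for auth.", fakeModel);
  // Only the one-line result is appended to the main thread.
  mainThread.push({ role: "assistant", content: `Docs agent result: ${docs}` });
  return mainThread.map((m) => m.content);
}

demo().then((lines) => console.log(lines.join("\n")));
```

The sub-agent never sees the main thread, and the main thread only ever sees the sub-agent's result.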

TillDone: A State-Driven Way of Working

This is where things get genuinely interesting—and I want to be precise about what TillDone actually is, because I've seen it mislabeled before (including by me).

TillDone is not a framework. It's not a library you import or a rigid system you wire up. Pioneered by developers like IndyDevDan in the "Tactical Agentic Coding" community, it's a task-discipline pattern—a structured methodology for managing complex, long-running engineering tasks that you bring to your agent, not the other way around.

Most AI agents operate in a simple loop: ask, act, repeat. They're reactive. And halfway through a complex project, they often lose the thread or hallucinate progress. In a standard chat interface, an AI might tell you it's "done" as soon as it writes a block of code. TillDone defines "done" differently.

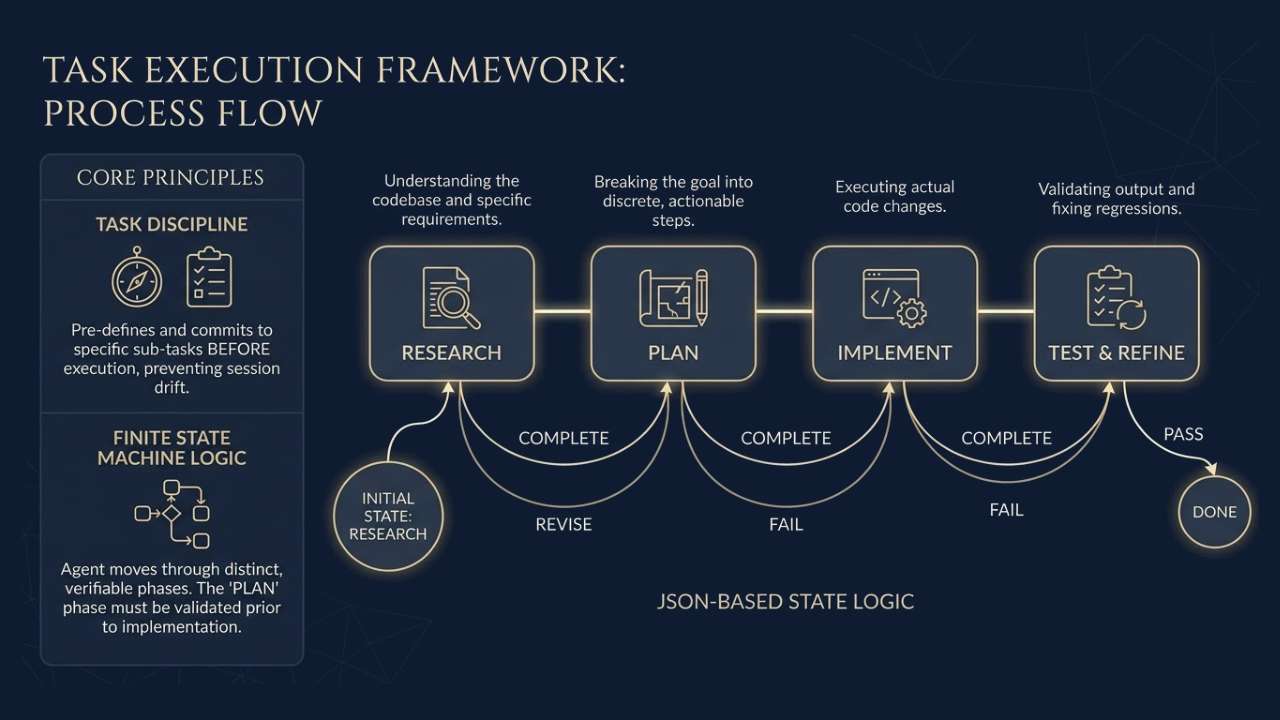

The Core Principles

Task Discipline

The agent must explicitly define and commit to a set of sub-tasks before execution begins. This single constraint prevents the "drifting" that kills complex sessions—where the agent slowly loses sight of the original goal across dozens of turns.
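
A minimal sketch of that constraint, assuming nothing beyond the description above: tasks are locked at commit time, and nothing can be marked complete before then. `TaskLedger` and its method names are illustrative only, since TillDone is a pattern rather than a library.

```typescript
// Minimal task ledger: sub-tasks are fixed at commit time, before any work starts.
// All names here are illustrative; TillDone is a pattern, not a library.
interface Task {
  name: string;
  done: boolean;
}

class TaskLedger {
  private tasks: Task[] = [];
  private committed = false;

  add(name: string): void {
    if (this.committed) throw new Error(`Plan is committed; cannot add "${name}"`);
    this.tasks.push({ name, done: false });
  }

  commit(): void {
    if (this.tasks.length === 0) throw new Error("Cannot commit an empty plan");
    this.committed = true;
  }

  complete(name: string): void {
    if (!this.committed) throw new Error("Commit the plan before executing");
    const task = this.tasks.filter((t) => t.name === name)[0];
    if (!task) throw new Error(`Unknown task "${name}"`);
    task.done = true;
  }

  // Names of tasks that are still open.
  remaining(): string[] {
    return this.tasks.filter((t) => !t.done).map((t) => t.name);
  }
}

const ledger = new TaskLedger();
ledger.add("update route definitions");
ledger.add("migrate loaders");
ledger.commit();
ledger.complete("update route definitions");
console.log(ledger.remaining()); // ["migrate loaders"]
```

Because `add` throws after `commit`, scope creep becomes a visible error instead of a silent drift.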

Finite State Machine Logic

The agent moves through distinct, verifiable phases. It cannot proceed to implementation until the "Plan" state is validated:

- Research — Understanding the codebase and specific requirements

- Plan — Breaking the goal into discrete, actionable steps

- Implement — Executing the actual code changes

- Test & Refine — Validating output and fixing regressions

Here's what that looks like as state logic:

```json
{
  "initial": "research",
  "states": {
    "research": {
      "on": { "COMPLETE": "plan" }
    },
    "plan": {
      "on": { "COMPLETE": "implement", "REVISE": "research" }
    },
    "implement": {
      "on": { "COMPLETE": "test", "FAIL": "plan" }
    },
    "test": {
      "on": { "PASS": "done", "FAIL": "implement" }
    },
    "done": { "type": "final" }
  }
}
```
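
To show how a config like this gets enforced, here is a small interpreter for it, with the terminal `done` state spelled out. The `Machine` type and the `transition` helper are illustrative names of my own, not part of any library.

```typescript
// A small interpreter for the state config above.
// `Machine` and `transition` are illustrative, not part of any library.
type Machine = {
  initial: string;
  states: Record<string, { on?: Record<string, string>; type?: "final" }>;
};

const tillDone: Machine = {
  initial: "research",
  states: {
    research: { on: { COMPLETE: "plan" } },
    plan: { on: { COMPLETE: "implement", REVISE: "research" } },
    implement: { on: { COMPLETE: "test", FAIL: "plan" } },
    test: { on: { PASS: "done", FAIL: "implement" } },
    done: { type: "final" },
  },
};

// Returns the next state, or throws if the event is not allowed from `current`.
function transition(machine: Machine, current: string, ev: string): string {
  const node = machine.states[current];
  if (!node) throw new Error(`Unknown state "${current}"`);
  const next = node.on ? node.on[ev] : undefined;
  if (!next) throw new Error(`Event "${ev}" is not valid in state "${current}"`);
  return next;
}

// Walk one possible run: a failed test sends the agent back to implement.
let state = tillDone.initial;
for (const ev of ["COMPLETE", "COMPLETE", "COMPLETE", "FAIL", "COMPLETE", "PASS"]) {
  state = transition(tillDone, state, ev);
}
console.log(state); // "done"
```

Any event the current state does not list is rejected, which is what stops an agent from jumping from research straight to implementation.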

Persistence Without Lock-in

Because the state is tracked independently—often in a simple JSON or markdown file—the agent can pause, resume, or even switch models mid-session without losing context or progress. It knows exactly where it left off. If it hits a rate limit or you need to step away, the mission doesn't evaporate.
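
Here is a minimal sketch of that pause-and-resume round trip. The `Snapshot` field names are assumptions for illustration; use whatever your own state file already tracks.

```typescript
// Serializable snapshot of a run.
// Field names are assumptions for illustration, not a TillDone specification.
interface Snapshot {
  phase: string;
  completedTasks: string[];
  pendingTasks: string[];
}

// Turns the snapshot into text suitable for a state file.
function save(snapshot: Snapshot): string {
  return JSON.stringify(snapshot, null, 2);
}

// Parses a state file and rejects it if required fields are missing.
function restore(text: string): Snapshot {
  const data = JSON.parse(text);
  const valid =
    data !== null &&
    typeof data === "object" &&
    typeof data.phase === "string" &&
    Array.isArray(data.completedTasks) &&
    Array.isArray(data.pendingTasks);
  if (!valid) throw new Error("State file is missing required fields");
  return { phase: data.phase, completedTasks: data.completedTasks, pendingTasks: data.pendingTasks };
}

// Simulate pausing in one session and resuming in another, possibly with a different model.
const onDisk = save({
  phase: "implement",
  completedTasks: ["update route definitions"],
  pendingTasks: ["migrate loaders"],
});
const resumed = restore(onDisk);
console.log(`Resuming in "${resumed.phase}" with ${resumed.pendingTasks.length} task(s) left`);
```

In practice `onDisk` would be written to and read from a file (for example with Node's `fs.writeFileSync` and `fs.readFileSync`); a string stands in for the file here so the example has no dependencies.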

The Context Guardrail

By anchoring the agent's attention to the current state of a specific task, TillDone minimizes "context bleed"—the tendency for an agent to get confused by previous, irrelevant turns in a long conversation. Your main engineering thread stays clean.
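
One possible way to apply this is to build each prompt from the tracked state rather than from the full transcript. The `Focus` shape and the prompt wording below are hypothetical, a sketch of the idea rather than a prescribed format.

```typescript
// Builds the next prompt from tracked state only, not from the full chat transcript.
// The `Focus` shape and the wording are hypothetical.
interface Focus {
  goal: string;
  phase: string;
  currentTask: string;
  completedTasks: string[];
}

function buildPrompt(focus: Focus): string {
  const doneList = focus.completedTasks.length > 0 ? focus.completedTasks.join("; ") : "none yet";
  return [
    `Goal: ${focus.goal}`,
    `Phase: ${focus.phase}`,
    `Already done: ${doneList}`,
    `Current task: ${focus.currentTask}`,
    "Work only on the current task. Report COMPLETE or FAIL when finished.",
  ].join("\n");
}

const prompt = buildPrompt({
  goal: "Migrate the app to React Router 7",
  phase: "implement",
  currentTask: "migrate loaders",
  completedTasks: ["update route definitions"],
});
console.log(prompt);
```

The prompt stays the same size no matter how long the session has run, because it is derived from the state file instead of the conversation history.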

"Done" only occurs when the state machine reaches its final exit state—after automated tests have passed and the output has been verified against the initial plan. Not "done enough." Done.

This approach is specifically designed for developers who want to move beyond using AI as a snippet generator and toward using it as an autonomous engineer capable of handling multi-file refactors and complex migrations. And the fact that it's a methodology rather than a library is precisely what makes it so portable—you can apply this pattern to Pi, to Claude Code, or to any agent you're working with.

The Honest Comparison

Let me lay out exactly how this stack compares to what I've been using:

| Feature | Claude Code (Monolithic) | Pi + Tallow (Modular) |
|---|---|---|
| System Prompt Size | ~10k+ tokens | ~300 tokens |
| Model Flexibility | Anthropic only | Model-agnostic (Claude, GPT, DeepSeek) |
| Workflow Logic | Linear, session-based | State-machine pattern (TillDone) |
| Customization | Opinionated, limited | TypeScript-based extensions |
| Multi-Agent Support | Limited | Native sub-agent spawning |

I'm not saying Pi + Tallow is better for everything. Claude Code wins for quick tasks, standard daily work, and when you just want something that works without thinking about it.

But for the 20% of work that requires deep customization? The complex migrations? The multi-agent orchestration? This modular stack is in a different league.

How to Actually Use This

If you're managing complex environments—implementing merge freezes on GitHub, migrating to React Router 7, upgrading to Tailwind CSS v4—this level of control isn't a nice-to-have. It's a necessity.

Here's my playbook:

Build Custom Tooling

If you have a unique CI/CD pipeline or specific architectural patterns, write a Pi extension in TypeScript. Instead of explaining your setup to the AI every time, give it a native tool to understand your environment.

Here's a real example—a tool for checking GitHub merge freeze status:

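```typescript
// extensions/merge-freeze.ts
// NOTE: verify the import path and `Tool` options against Pi's extension docs for your version.
import { Tool } from '@pi/sdk';

// Hypothetical helper: swap in your own lookup (CI variable, freeze file, API call).
// The MERGE_FREEZE environment variable is an assumption for illustration.
async function getFreezeStatus(): Promise<boolean> {
  return process.env.MERGE_FREEZE === 'true';
}

// Lets the agent ask about freeze status directly instead of being told in every prompt.
export const checkMergeFreeze = new Tool({
  name: 'check_merge_freeze',
  description: 'Checks if the current repository has an active merge freeze.',
  execute: async () => {
    const isFrozen = await getFreezeStatus();
    return isFrozen ? 'REJECTED: Merge freeze in effect.' : 'PROCEED: No active freeze.';
  },
});
```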

Use Strategic Model Switching

Don't treat all AI tasks the same. Use a heavy reasoning model (Claude Sonnet, GPT-4) for the "Plan" phase where architecture decisions happen. Then switch to a faster, cheaper model for implementing boilerplate code.

This isn't about being cheap. It's about being smart with resources so you can afford to send more context when it matters.

Adopt the Hybrid Strategy

Use Claude Code for standard daily tasks—80% of your work where polish and ease of use matter most.

But switch to Pi and the TillDone pattern for that other 20%: deep customization, multi-agent orchestration, complex workflows that span multiple sessions.

What This Really Means

We're moving away from asking AI to "write a function" and toward asking it to "manage a workflow."

This is a bigger shift than it sounds. It's the difference between having a capable assistant and building a tailored engineering organization inside your terminal.

Tools like Pi and plugins like Tallow aren't just alternatives to Claude Code; they're building blocks for a stack you control. And patterns like TillDone aren't frameworks you adopt. They're a way of thinking about AI workflows that you can bring to any stack.

For those of you who've been following along on my Claude Code journey: I'm not abandoning ship. But I am expanding the toolkit.

And honestly? I think you should consider doing the same.

Have you experimented with modular AI tooling? Hit me up—I'd love to hear what's working for you.