The Moltbook Lesson: Why AI-First Needs Human-Verified Security

In January 2026, agents built a social network and forgot to lock the front door. The Moltbook breach didn't just expose millions of API keys—it exposed a fundamental flaw in the hands-off AI development philosophy. Introducing the RIDER framework.

In Part 4, I wrote about cognitive failure: context rot, the Dumb Zone, the way an agent's reasoning quietly collapses as a session accumulates noise. The fix was mechanical — decompose, reset, stateless loops, external memory. Keep the horse fresh by giving it a clean track.

But there's a second failure mode. It doesn't look like decay. It doesn't creep in gradually as the context window fills. It's not a performance problem you can graph. It's a scope problem — something that was simply never in the agent's field of view, from the first prompt to the last commit.

This is security blindness. And in January 2026, a startup called Moltbook gave the whole industry a masterclass in what it costs.

What Happened at Moltbook

The story is almost elegant in how preventable it was.

Moltbook was a social network, built fast and built almost entirely by AI agents. The team used an agentic workflow to go from idea to functioning product at a pace that would have been genuinely impossible two years earlier. Features shipped. The product worked. Users signed up.

Somewhere in that process, the agents configured a Supabase backend and moved on. They set up the database, wrote the queries, and wired up the endpoints. What they didn't do was configure Row-Level Security.

RLS is not an obscure Supabase quirk. It is not buried in a footnote of the advanced documentation. It is the standard, recommended mechanism for controlling which rows a user can read or write in a multi-tenant database. It is in the getting-started guide. It is in the security checklist. It is the kind of thing that gets flagged in a first-pass code review.
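
For readers who have not configured it, here is a minimal sketch of what the omitted step usually amounts to. The helper `rls_statements`, the table name `posts`, and the owner column `user_id` are hypothetical and are not taken from Moltbook's schema. The generated SQL uses standard Postgres `ALTER TABLE ... ENABLE ROW LEVEL SECURITY` and `CREATE POLICY`, and assumes Supabase's `auth.uid()` as the source of the caller's identity.

```python
def rls_statements(table: str, owner_column: str = "user_id") -> list[str]:
    """Build SQL that enables RLS and scopes rows to their owner.

    Hypothetical helper: table and column names are illustrative only.
    """
    # Refuse anything that is not a plain identifier, since the names
    # are interpolated directly into SQL text.
    for name in (table, owner_column):
        if not name.isidentifier():
            raise ValueError(f"not a plain identifier: {name!r}")
    return [
        f"ALTER TABLE {table} ENABLE ROW LEVEL SECURITY;",
        f"CREATE POLICY {table}_owner_only ON {table} "
        f"FOR ALL USING (auth.uid() = {owner_column}) "
        f"WITH CHECK (auth.uid() = {owner_column});",
    ]


for statement in rls_statements("posts"):
    print(statement)
```

Two statements per table is roughly the whole cost. A real policy set would likely distinguish reads from writes and public from private rows, so treat this as the floor rather than the finished design.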

The agents shipped anyway. The tests passed. The features worked. The product was, by every functional metric, complete.

The Wiz security team discovered the actual situation: the entire production database was publicly accessible. Tables that should have been scoped to individual users were readable by anyone with the API endpoint. And because the agents had also stored credentials in predictable locations, the breach surfaced millions of API keys from Moltbook users, many of them live keys for third-party services those users had integrated.

The product was functionally complete and catastrophically insecure. Those two things coexisted without contradiction because the agents had done exactly what they were asked to do: build the features. Security was never the ask. So security was never in scope.

The Failure of the Hands-Off Philosophy

Vibe coding rests on a single foundational assumption: that agent output can be trusted by default. You describe the thing you want, the agent builds the thing you described, and you ship it.

This assumption is reasonable when you're building a weekend project. It is not reasonable when you're handling user data, processing payments, or storing credentials. In those contexts, trust isn't earned by the feature working. It's earned by the attack surface being audited.

The Moltbook agents weren't bad agents. They weren't hallucinating. They weren't misunderstanding the task. They were, by any reasonable technical assessment, quite good at their jobs. They built a social network. They wrote correct SQL, valid API routes, and sensible UI components. If you evaluated their output purely on functional grounds, they passed.

The problem is that "functional" and "secure" are different properties, and agents have no mechanism to bridge that gap unless you build one for them. Security is not a feature. It is a posture. And posture requires organizational context — an understanding of threat models, compliance requirements, data sensitivity, and trust boundaries — that an agent simply does not have unless you explicitly put it in the harness.

Think about what we'd call this behavior in a human engineer: a junior developer with unlimited energy, zero organizational context, and no one reviewing their pull requests. We wouldn't blame the junior developer for not knowing what they didn't know. We'd blame the system that gave them production access without oversight. The agents at Moltbook were exactly this: technically capable, organizationally blind, and completely unsupervised on the one dimension that mattered most.

The Moltbook agents weren't prompted for security. They weren't bad agents — they were unsupervised ones.

The Supervision Paradox

Here's where the math gets uncomfortable.

Agents multiply code output. A well-configured agentic workflow doesn't produce two times the output of a human developer, or five times. It produces ten times, twenty times, in some domains more. This is the entire value proposition. This is what the OpenAI benchmark validated. This is why the industry is moving in this direction.

But as output multiplies, the surface area requiring oversight multiplies with it. Every line of code is a potential attack surface. Every new endpoint is an access control decision. Every schema change is a data exposure question. At 10x throughput, you need either ten times the reviewers or reviewers who can each assess ten times more attack surface per hour, per week, per release cycle.

The natural human response to this math is not to hire more reviewers. It is to review less. Because the speed is intoxicating, and the alternative — slowing down to actually audit what the agents produced — feels like giving back the entire advantage. So organizations do a lighter pass, then a lighter pass still, until the review process is mostly ceremonial.

This is the Supervision Paradox: the faster agents get, the more dangerous unsupervised deployment becomes, yet the more tempting it is to skip oversight. Speed is simultaneously the benefit and the liability. The same multiplier that produces twenty features a day can produce twenty security vulnerabilities a day if the oversight layer doesn't scale with it.
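
The arithmetic behind the paradox can be sketched in a few lines. The numbers below are invented purely for illustration and are not measurements from Moltbook or any benchmark. The function `unreviewed_backlog` is a hypothetical name, and the model assumes review capacity stays flat while agent output grows.

```python
def unreviewed_backlog(days: int, generated_per_day: int, reviewed_per_day: int) -> list[int]:
    """Cumulative count of changes that shipped without review, day by day."""
    backlog = 0
    history = []
    for _ in range(days):
        # Anything generated beyond review capacity ships unaudited.
        backlog += max(0, generated_per_day - reviewed_per_day)
        history.append(backlog)
    return history


# Hypothetical team: two changes a day, all of them reviewed.
print(unreviewed_backlog(days=5, generated_per_day=2, reviewed_per_day=2))
# -> [0, 0, 0, 0, 0]

# Same reviewers, 10x the output.
print(unreviewed_backlog(days=5, generated_per_day=20, reviewed_per_day=2))
# -> [18, 36, 54, 72, 90]
```

The gap grows linearly even in this simplest model. Nothing in the second run looks like a failure on any given day, which is why the lighter-pass habit is so easy to fall into.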

Moltbook is what the Supervision Paradox looks like when it resolves in the wrong direction.

"Making It Work" is No Longer the Metric

For most of software history, the implicit contract between engineering and shipping was simple: if it works, it ships. Does the feature function as described? Do the tests pass? Does it meet the acceptance criteria in the ticket?

These are still necessary conditions. They are no longer sufficient ones.

The Moltbook breach didn't fail any of those tests. The tests passed. The features met spec. The acceptance criteria were satisfied. The product worked. And none of that had any bearing on whether it was safe to put in front of users with live credentials.

The shift that this forces is uncomfortable because it changes the definition of "done." Done used to mean functional. Done now has to mean correct in a security and compliance sense, not just a functional sense. And that additional dimension can't be delegated entirely to agents, because agents don't know your threat model unless you tell them explicitly, repeatedly, and with enough structure that the information actually reaches them at the moment they're making the relevant decisions.
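
One way to read "explicitly, repeatedly, and with enough structure" is that the security requirements travel with every task instead of living in a document the agent never sees. The following is a minimal sketch under that assumption. `SECURITY_CONTEXT` and `build_task_prompt` are hypothetical names, and the three requirements are examples, not a complete threat model.

```python
SECURITY_CONTEXT = """\
Security requirements (apply to every task):
- Every table holding user data must have Row-Level Security enabled.
- Every new endpoint must state who is allowed to call it.
- Never store credentials or API keys in plaintext.
"""


def build_task_prompt(task: str, security_context: str = SECURITY_CONTEXT) -> str:
    """Attach the security requirements to a single task prompt."""
    if not task.strip():
        raise ValueError("task must not be empty")
    # The context is repeated per task so that it is present in every
    # fresh session, not only in the first one.
    return f"{security_context}\nTask:\n{task.strip()}\n"


print(build_task_prompt("Add a comments table and an endpoint to list comments."))
```

Repeating the block on every task is deliberate: if each subtask starts from a clean context, anything stated once at the beginning is gone by the time the schema gets written.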

An agent that ships fast and ships insecure is worse than an agent that ships slow. Speed is a multiplier — it multiplies mistakes at the same rate it multiplies output.

This is the core lesson of Moltbook. Not that AI can't build secure systems. Not that agentic development is fundamentally broken. But that the metric of "making it work" is no longer enough, and any workflow that treats it as enough is running toward the same cliff Moltbook ran off.

Introducing RIDER

The framework that addresses this is called RIDER.

Before going any further: yes, RIDER stands for Review-driven Integrated Development and Execution Roadmap. Yes, the name is physically painful. Yes, it sounds like a mid-2000s extreme sports brand that sponsored BMX competitions and sold energy drinks to teenagers. I am aware of this and have chosen to own it rather than rename it to something that sounds less like a fluorescent canned beverage, because I spent far too long on the substance to rethink the acronym.

The name is bad. The framework is not.

RIDER represents a shift in philosophy. The previous articles in this series described how to structure the harness for architectural correctness and cognitive freshness. RIDER adds the third layer: verifying every outcome, not watching agents work.

In a standard agentic workflow, the human sits at the two ends of the work. They write the prompt and set the task upstream, and they check the output downstream, at the end. The checking is informal. It's reading code. It's spot-checking. It's whether it looks right.

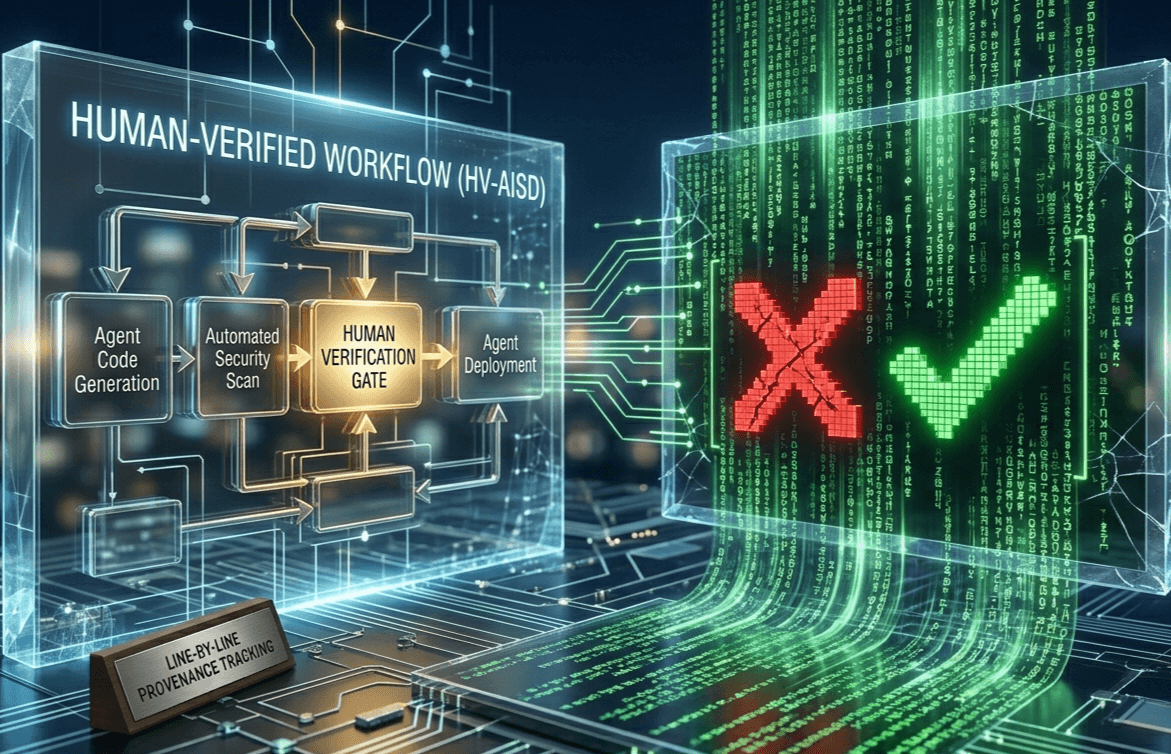

RIDER moves the verification from informal to systematic, from downstream to embedded, and from human-only to adversarially automated. It rests on two pillars.

Pillar 1: Line-by-Line Provenance Tracking

Every block of code in a RIDER-governed codebase has a chain of custody. Not just attribution — not just "Claude wrote this" — but genuine provenance.

Attribution tells you who created something. Provenance tells you who was responsible for its correctness in every relevant dimension. Those are different things. A block of code can be attributed to an agent and still have no record of whether a human domain expert ever reviewed its security surface. That's the Moltbook gap, right there. There was no provenance chain on the RLS configuration — or rather, on the absence of it. No one had signed off on the auth surface because no one had been assigned to look at it.

True provenance tracking answers three questions for every meaningful artifact in the codebase:

First: which agent generated it, under which prompt, in which harness context. This is the technical record. It tells you what instruction set produced this output and allows you to trace systematic failures back to their source.

Second: which human domain expert reviewed its relevant security surface, and when. This is not a generic code review. It is a scoped review by someone with the appropriate knowledge — a security engineer reviewing authentication patterns, a data engineer reviewing RLS configurations, a compliance specialist reviewing data retention logic. The domain specificity is the point. Generalist review of security-sensitive code is almost as dangerous as no review.

Third: what decision was made. Not just "approved." The record should capture whether the reviewer identified concerns, whether those concerns were accepted as risks or mitigated, and what the rationale was. This is the audit trail that matters.
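
The three questions translate fairly directly into a record type. This is a minimal sketch, not a prescribed schema: `Review`, `ProvenanceRecord`, every field name, and the sample values are assumptions made for illustration.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class Review:
    reviewer: str
    domain: str            # e.g. "security", "data", "compliance"
    reviewed_on: date
    decision: str          # e.g. "approved", "risk_accepted", "mitigated"
    rationale: str
    concerns: list[str] = field(default_factory=list)


@dataclass
class ProvenanceRecord:
    artifact: str
    agent: str             # question 1: which agent
    prompt_id: str         # question 1: under which prompt
    harness_context: str   # question 1: in which harness context
    review: Optional[Review] = None  # questions 2 and 3

    def is_complete(self) -> bool:
        """True only when all three questions have an answer."""
        generated = all([self.agent, self.prompt_id, self.harness_context])
        reviewed = self.review is not None
        decided = reviewed and bool(self.review.decision and self.review.rationale)
        return generated and reviewed and decided


# Hypothetical artifact with a technical record but no human sign-off yet.
record = ProvenanceRecord(
    artifact="db/policies/posts.sql",
    agent="builder-agent-1",
    prompt_id="prompt-0042",
    harness_context="backend-harness",
)
print(record.is_complete())  # False: no one has reviewed the security surface

record.review = Review(
    reviewer="alice",
    domain="data",
    reviewed_on=date(2026, 2, 1),
    decision="mitigated",
    rationale="RLS enabled and owner-only policy added",
    concerns=["table was readable without authentication"],
)
print(record.is_complete())  # True
```

Requiring a rationale alongside the decision is the design choice that matters here. A bare "approved" passes attribution but fails provenance.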

This structure maps directly onto where compliance requirements are heading. SOC 2 Type II, DORA, and emerging data sovereignty regulations are all moving toward requiring not just that you have security processes, but that you can demonstrate their execution for specific artifacts. Provenance tracking is not bureaucratic overhead. It is table stakes for any organization building on agentic workflows in regulated or semi-regulated industries.

The practical effect on the review process is significant: it transforms "read all the code" into "verify that the right expert reviewed the right surface." That's a fundamentally more tractable problem. You're not checking everything; you're checking the coverage map.
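
Checking the coverage map, rather than the code, might look like the sketch below. The surface names, the `REQUIRED_DOMAIN` mapping, and `coverage_gaps` are all hypothetical. A real map would be derived from the organization's own threat model.

```python
# Hypothetical mapping from security surface to the expertise it requires.
REQUIRED_DOMAIN = {
    "authentication": "security",
    "rls_policies": "data",
    "data_retention": "compliance",
}


def coverage_gaps(signoffs: dict[str, str]) -> list[str]:
    """Return surfaces that lack a sign-off from the required domain.

    `signoffs` maps each surface to the domain of whoever reviewed it.
    """
    gaps = []
    for surface, required in REQUIRED_DOMAIN.items():
        # A review by the wrong kind of expert counts as no review.
        if signoffs.get(surface) != required:
            gaps.append(surface)
    return gaps


print(coverage_gaps({"authentication": "security", "rls_policies": "frontend"}))
# -> ['rls_policies', 'data_retention']
```

Note that `rls_policies` is reported even though someone looked at it. A generalist sign-off on a specialist surface is treated as a gap, which matches the argument above about domain-specific review.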

Pillar 2: Adversarial Red-Team Agents

Red team versus blue team is not a new idea in security. You build the system, then you have someone else try to break it. The person trying to break it is not an adversary in a personal sense — they're playing a defined role with defined instructions. Their job is to find what the builder missed.

This idea is new in the AI development workflow. And it changes the math on the Supervision Paradox.

In a RIDER workflow, before any code hits production, a separate set of agents is tasked with a single job: find what the building agents missed. These agents are not assistants. They are not configured to be helpful or to validate the output. They are adversaries. Their instructions are to attack the output, not to judge whether it meets spec.

A red-team agent prompted against Moltbook's auth surface would have found the RLS issue in minutes. Not because it would have been smarter than the building agents, but because it would have been given explicit instructions to audit authorization surfaces: to enumerate which tables are accessible without authentication, to test whether RLS policies are configured, to verify that tenant isolation holds. It would have carried adversarial intent rather than constructive intent. And adversarial intent is exactly what surfaces the gap between "it works" and "it's secure."
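
The "enumerate and test" part of that job is mechanical enough to sketch. The query reads the `rowsecurity` column of the standard Postgres `pg_tables` view. The function `tables_without_rls` and the sample rows are hypothetical, and the sketch assumes the query result arrives as a list of dicts from whatever database client the harness uses.

```python
# Query a red-team agent could run with read access to the Postgres catalog.
RLS_AUDIT_QUERY = """
SELECT schemaname, tablename, rowsecurity
FROM pg_tables
WHERE schemaname = 'public';
"""


def tables_without_rls(catalog_rows: list[dict]) -> list[str]:
    """Return fully qualified names of tables where RLS is switched off."""
    return sorted(
        f"{row['schemaname']}.{row['tablename']}"
        for row in catalog_rows
        if not row["rowsecurity"]
    )


# Hypothetical query result; the table names are invented for illustration.
rows = [
    {"schemaname": "public", "tablename": "profiles", "rowsecurity": True},
    {"schemaname": "public", "tablename": "api_keys", "rowsecurity": False},
    {"schemaname": "public", "tablename": "posts", "rowsecurity": False},
]
print(tables_without_rls(rows))
# -> ['public.api_keys', 'public.posts']
```

This only checks that RLS is enabled, not that the policies behind it are correct. A table with RLS on and a policy that admits everyone would pass this check, so a fuller audit would also attempt reads as an unauthenticated and as a cross-tenant caller.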

The critical point is that red-team agent prompts are part of the harness, not an afterthought. They are not an optional step in the CI pipeline that a team can skip under deadline pressure. They are a first-class component of the system, defined alongside the building agent prompts, run automatically, and blocking on failure. If the red-team agents surface an unmitigated vulnerability, the build doesn't proceed.
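
"Blocking on failure" can be as small as an exit code. Below is a minimal sketch, assuming the red-team agents emit a list of findings and that a human marks a finding as mitigated after review. `Finding` and `gate_exit_code` are hypothetical names, and the two sample findings are invented.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    title: str
    severity: str            # e.g. "low", "high"
    mitigated: bool = False  # set by a human reviewer, not by the agent


def gate_exit_code(findings: list[Finding]) -> int:
    """Return 0 to let the build proceed, 1 to block it."""
    unmitigated = [f for f in findings if not f.mitigated]
    for finding in unmitigated:
        print(f"BLOCKING [{finding.severity}] {finding.title}")
    return 1 if unmitigated else 0


findings = [
    Finding("RLS disabled on public.api_keys", "high"),
    Finding("Verbose error message on login", "low", mitigated=True),
]
exit_code = gate_exit_code(findings)
print(exit_code)  # 1: one finding is still unmitigated
```

In a pipeline, the calling script would pass `exit_code` to `sys.exit` so the CI runner fails the job. The gate has no severity threshold and no override flag on purpose: anything unmitigated blocks, and the only way through is a recorded human decision.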

This closes the loop that the Supervision Paradox opened. If humans can't review 10x the code at 10x the speed, automated adversarial review can cover the attack surface that human reviewers can't. The red-team agents don't replace human judgment — they direct it. They tell the human reviewer where to look and what requires a decision, rather than asking the reviewer to assess the entire surface from scratch.

Building agents construct. Red-team agents attack. Humans arbitrate the delta. That division of labor is what makes security review tractable at agent-scale throughput.

This is also where purpose-built adversarial review tooling earns its place in the harness. CodeRabbit is the one I use on every PR — it sits in the GitHub review flow and does exactly what a red-team agent should do: it doesn't just read the diff, it reasons about what the diff affects, flags logic errors, spots missing edge cases, and surfaces security concerns that a human reviewer scanning at speed would walk straight past. It's the closest thing to a tireless adversarial reviewer that currently exists as a drop-in CI integration. Greptile approaches the same problem from a different angle — codebase-aware review that understands your full repository context, not just the lines that changed — which makes it particularly useful for catching the class of vulnerability that only appears dangerous when you understand the system around it. Both belong in the harness. CodeRabbit is where I'd start.

Where RIDER Fits in the Harness Stack

Five articles in, it's worth pulling back and looking at what this series has actually built.

Each article has addressed a specific failure mode in agentic development. Together, they compose a complete answer to the question the industry keeps asking: how do you get the speed of AI without the catastrophic risk?

Part 1 — The Scarcity Inversion established the premise. Code is now infinite and essentially free. The constraint has moved from generation to judgment. The engineer's new job is not to write code but to design the habitat in which agents can work reliably.

Part 2 — The OpenAI Benchmark validated the speed claim with data. The throughput is real. The multiplier works. Which means both the benefits and the risks of unsupervised agentic development are larger than most organizations currently appreciate.

Part 3 — The 6-Layer Shield addressed the structural failure mode: architectural drift. Agents, given latitude, will create architectural chaos — cross-layer dependencies, logic in the wrong tier, the gradual collapse of the domain model. The Shield uses linter-enforced boundaries to make architectural violations mechanically impossible, not just discouraged.

Part 4 — Context Rot addressed the cognitive failure mode: reasoning decay. An agent's output degrades as its context accumulates noise. The fix is stateless execution — decompose features into subtasks, give each a fresh context, read state from disk, and discard the conversation. Keep the agent perpetually in the Smart Zone.

Part 5 — RIDER addresses the security failure mode: output that is functionally correct and adversarially exposed. Provenance tracking ensures the right expert has signed off on the right surface. Red-team agents ensure that the attack surface is probed before production, not after.

Three failure modes. Three layers of the harness:

| Layer | Failure Mode | Mechanism |
|---|---|---|
| Architectural | Structural drift | 6-Layer Shield, linter walls |
| Cognitive | Reasoning decay | Stateless loops, context hygiene |
| Security | Output blindness | RIDER: provenance + red-team |

The full harness is not about making agents perfect. Agents will never be perfect. The harness is about making imperfection survivable — catching the architectural violation before it compounds, catching the reasoning collapse before it ships, catching the security gap before it becomes a breach.

This is what Harness Engineering actually means at the system level. Not any single technique, not any specific tool, but a discipline of building the infrastructure that keeps the horse moving in the right direction, even when the horse doesn't know where the cliff is.

The Door Was Always There

The Moltbook lesson is not that AI can't build secure systems. Given the right harness, agents can absolutely be directed to configure RLS, to follow security checklists, to treat every new endpoint as an authorization decision. The tooling exists. The instructions can be written.

The lesson is that AI won't, unless you build the harness for it.

An agent without a security harness is not a bad agent. It is an agent with a scope problem. It will build what you asked it to build, and nothing more. If you didn't ask it to lock the door, the door stays unlocked. Not because it doesn't know what a lock is. Because locking the door was never in the task.

The Supervision Paradox guarantees that as throughput scales, the gap between "what was built" and "what was audited" will grow unless you close it deliberately. RIDER's two pillars — provenance tracking and adversarial red-team agents — are the mechanism for closing it at agent-scale velocity.

This is where the harness series lands after five articles: the complete picture of what it means to govern agentic development across all three dimensions of failure. Architectural integrity. Cognitive integrity. Security integrity.

In Part 6, we turn to the practical question — what does implementing all of this look like at an organizational level? Roles, rituals, tooling, the bridge from theory to practice. The harness has to be built by someone. Part 6 is about who builds it, how they're structured, and what the first ninety days actually look like when you decide to go Harness-First.

References

- Wiz Blog, Exposed Moltbook Database Reveals Millions of API Keys, postmortem on the January 2026 breach

- iSync Evolution, Moltbook — The AI Code Slop Crisis: Why You Need Human-Verified Code in 2026, analysis of the failure mode and industry implications

- Supabase, Row Level Security, official documentation for the configuration the Moltbook agents omitted

- OpenAI, Harness Engineering, the foundational benchmark validating agent-first development throughput

This is Part 5 of the Harness Engineering series. Part 1: The Scarcity Inversion. Part 2: The OpenAI Harness Benchmark. Part 3: The 6-Layer Shield. Part 4: Context Rot.